Everything is moving toward evidence-based decision making. In a recent survey of business professionals, 58% say analytics is playing an integral role. They rely on that information to make their business more competitive (46%), target new customers (36%), and evaluate employees (29%).

What is Business Intelligence?

It is the process of mining raw data and statistics in search of actionable knowledge. Things that guide the business in making the best decision possible. By definition, business intelligence needs to be three things:

- Intelligent

- Strategic

- Predictive

An example of business intelligence can be found in casinos. Credit card companies charge interchange fees on transactions and those fees can be traced back to different locations and services within the casino. Using these, the casinos can tell customer activity from purchases. What did they buy first? Did they buy food first or gifts from the gift store? Looking at the fees gives the casinos insight into the behavior patterns of their best customers. Don’t assume the stories that the data will tell you. Instead, use data mining to discover emerging trends

Data Warehouse

The extracting, loading, and cleaning data never changes. It’s a continual process. However, we still have to model the data into something useful with various demographics, geographic, and sales data.

Forecasting is getting increasingly sophisticated. These days, Chili’s can text 20% off coupons based on GPS info. To be successful, we need to look at the outliers and how they are performing. Are the numbers of 70 years old riding bikes increasing or decreasing? If so, should we produce a senior friendly bike? As you can tell, marketing plays a part in Business Intelligence.

There are different audiences for summary and aggregate data. Executives care about aggregate, high-level data while line workers care about transactional reports and finding the exceptions.

With Performance Management, we put together different buckets of data from a customer pont of view, an employee point of view, etc. Each has different key performance indicators (KPI) and we use dashboards and scorecards as tools to arrange leading and lagging KPIs together.

BI Maturity Model

- Some Reporting

- Ad-Hoc Reports / Drill Down Reporting

- Performance Management

- Predictive Analytics – SAS, SSAS, Pentaho

- Competitive Analytics

Data warehouse – Central repository of information from various lines of business applications to support enterprise decision-making

Goal of a data warehouse is to provide timely, accurate, and governed information. Yesterday’s data, along with 10 years of data is important for comparison’s sake. What is daily to you, might be monthly to someone else. For tax data, need that year’s worth of data. How important is just yesterday?

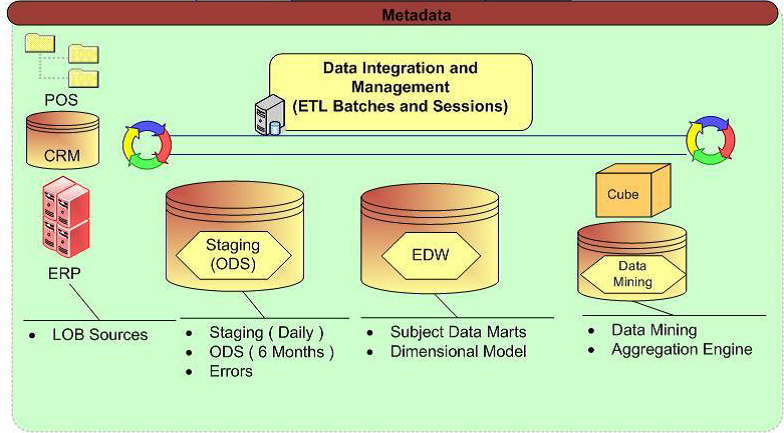

Common Components and Processes

- Staging Area

- Operational Data Store (ODS)

- Dimensional Model (Kimball)

- Cube Technology

- ETL

Staging Environment

- Houses data from required source systems. Sometimes an exact copy, but most often a query to target model

- Not always up to date (interval pulls)

- Goal is to not compromise performance on source systems

- Each Source System Extract may be at different times

- This process is only the “E&L” of ETL

Have to fix the data from the source tables.

Bob Jones = JONES, R. = Robert Jo nes

Need to have ways to standardize the data

Stage data in a repository from different data sources – POS, CRM, ERP

Latency matters. Look at the frequency of refreshes on source tables.

Operational Data Store (ODS) –

- Typically the integration from all the source systems

- Denormalizing things from 3rd normal form into dimensions

- Contains clean data that has been scrubbed through the ETL process against business rules and various technical rules and constraints. Much more to come in our ETL class

- The important process loading this is the “T,” in ETL

Pull just what we can. Has the detail behind the EDW.

Staging area and ODS can be the same or different.

Staging area – Pull only what you need. If you just need 3 years, don’t pull 5 years. Use the staging area to eliminate any errors.

Get the data off the production servers and move them to the reporting servers

Enterprise Data Warehouse (EDW) Environment

- Houses multiple subject areas across the enterprise. Vault Data Modeling

- Typically a Dimensional Model (Constellation)

- Additional Aggregated tables may be present.

- Contains all required history

- Type of historical changes enforced through slowly changing dimensions, and fact table type – Primary source of trouble

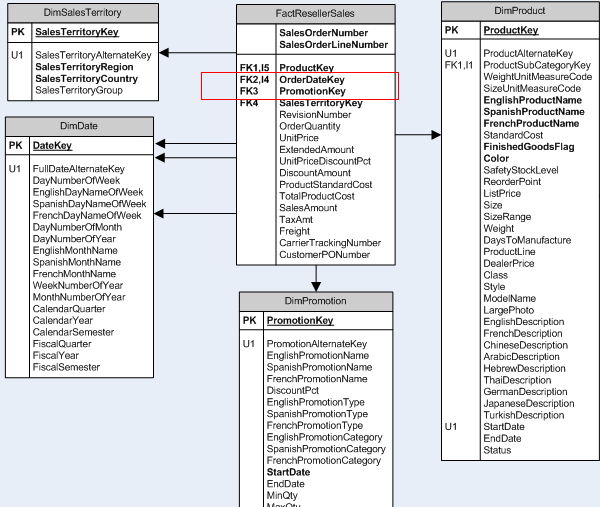

Kimball’s Dimensional Model – used to spot slowly changing attributes

- Facts and dimensions (categories)

- Making the data easy to query. Star schema example shown below.

** Vault Data Modeling – Every row in a Data Vault must be accompanied by record source and load date attributes, enabling an auditor to trace values back to the source.

Cube

- Allows you to grab data by grabbing certain key fields

- Utilizes an Aggregation Engine for pre-calculations and indexing. Has to be processed.

- An extension of the data warehouse

- Typically Sourced from the Dimensional Model

- Provides Dimensional Analysis or Pivoting across dimensional members (Cross-Drill) and measures across time periods

- Ideal for comparing time range calculations (Daily, Monthly, Annually)

Aggregation can be done into separate tables for daily, monthly, and annual numbers. For cubing, Teradata is good at crunching numbers. No different from what Big Data is doing. Distributing the work to many servers, many processors.

Needs for a Data Warehouse

- The OLTP source systems are archiving and purging data without tracking history.

- Users are complaining about duplicate and inaccurate information from current reporting environment.

- The data that is currently reported on from existing reporting environment is slow and users are unable to get to the analytics across multiple areas within the organization.

- Important to catch errors in staging – “If it’s not trusted, nobody is going to use it.”

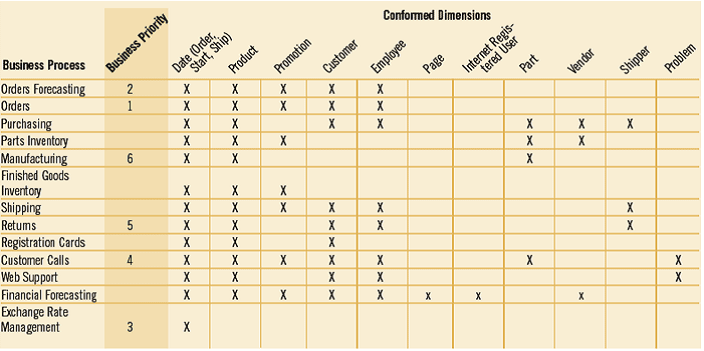

Gathering Requirements

- Interview Department team members.

- Document Business Processes using any BPM tool.

- Information and Data Profiling to support business case from the user. Is the data actually available and does it support the Business process Model? An example would be – can we actually capture sales information for each product over a specific period of time?

- What resources and tools will be needed for the project?

- Create BUS Diagram – Match Business Process to Conformed Dimensions

Create Project Plan with Sponsorship

- Establish the Charter and Sponsors (very critical to success). Support for Business Intelligence Competency Center (BICC)

- Setup Steering Committee, especially if funds for the data warehouse is spread across multiple organizations. Important for the initial launch. Research the data points and find out the latency. Make sure that everyone understands the tools.

- Agile –vs-Waterfall approach. Really it does not matter which, just show value early and often.

- Waterfall – Impossible to nail down all the requirements ahead of time. Requirements evolve over time.

- Test, Test, Test

Education and Training

- Data warehouse is just data without visualization. Sponsors get very anxious when the ETL takes approximately 80% of the time and money spent in a Data Warehouse development and they don’t have visualization into it.

- Educate the users that will access the data warehouse on the new technologies. Don’t let the complexity of the tool compromise the success of the data warehouse.

- Kimball methodology is common and easy to learn.

- Include analysts, DBAs, power users, etc.

- Use the test cases, stories, as an opportunity to get the users involved early.

ODS and EDW can quickly run into capacity constraints. It’s up to the owner of the Data Warehouse to keep an eye on this.

Metadata – Data about data –Too Ambiguous

- Operational (ETL): Source to target mapping, load times, Number of received during load. Error counts.

- Business: Describes the Data Warehouse Components in Business terms. Net Sales, Net Sales = Sales –Expenses

- Technical: Data describing the technical aspects of the data warehouse. Example: msrnetsales, DimAsset, tblassetgroup, procloaddimasset.